The Claude Code Leak Showed Me What I Was Configuring Wrong

What Anthropic's own code says about how you should actually configure your setup.

Claude Code's source leaked this week. The full internal codebase. Coordinator module, memory system, skill loader, hooks engine, permission resolver. All of it.

I spent days reading through it. Not to analyze the architecture. To find out what I should be doing differently.

Here's what I found: most of us are using Claude Code wrong. Not broken-wrong. Suboptimal-wrong. The kind of wrong where everything works but you're leaving half the tool's capability untouched.

The leaked code has a multi-stage compaction system most users never trigger. A semantic memory ranker that calls Sonnet separately on every query to pick which memories to surface. A hooks engine that fires even in YOLO mode. And a skill discovery system that never activates most people's skills because their frontmatter is missing.

I made 5 changes to my setup after reading the source. Each took under 30 minutes. Here's what they are and why the code says they matter.

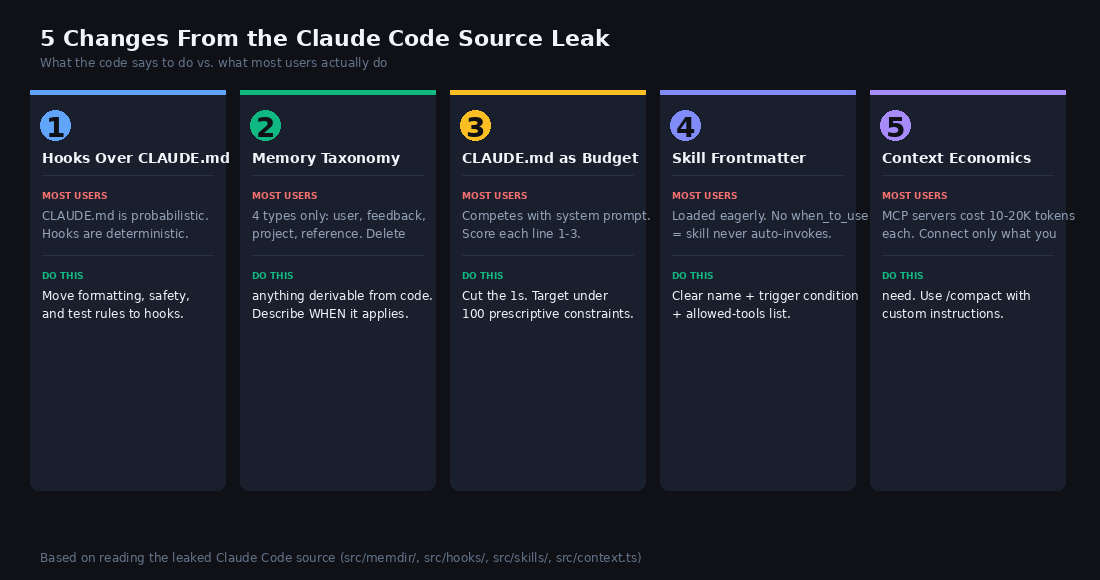

1. Stop Putting Rules in CLAUDE.md. Use Hooks.

In my experience, this is the most common misconfiguration.

CLAUDE.md is probabilistic. The model reads your instructions, tries to follow them, and sometimes forgets them mid-conversation. The leaked code shows why. Your CLAUDE.md shares an instruction budget with the system prompt itself. The system prompt already ships with dozens of built-in instructions: tool definitions, safety rules, output formatting, git protocols. Your CLAUDE.md competes with all of them for the model's attention. The more instructions you add, the less reliably any individual instruction gets followed.

Many people write 300-line CLAUDE.md files. At that length, each individual rule is heavily diluted.

Hooks are different. They're deterministic. A PreToolUse hook that blocks `rm -rf` will always block it. Every single time. Even if you're running with `--dangerously-skip-permissions`. Even if the model "forgot" the rule. A CLAUDE.md line saying "never run rm -rf" will work most of the time. But not all of the time.

The code makes this distinction deliberately. The internal hooks system has a full async registry with timeout protection, progress streaming, and ordered execution. This isn't an afterthought. It's the primary enforcement layer for anything that must always happen.

The rule: If it must always happen, make it a hook. If it should usually happen, put it in CLAUDE.md.

Here's what should move out of CLAUDE.md into hooks:

Code formatting. A PostToolUse hook runs Prettier or Black after every edit. Deterministic. No model cooperation needed.

```json
{
  "hooks": {
    "PostToolUse": [
      {
        "matcher": "Edit|Write",
        "hooks": [{ "type": "command", "command": "npx prettier --write \"$FILE_PATH\"" }]
      }
    ]
  }
}
```

Dangerous command blocking. A PreToolUse hook with exit code 2 blocks execution before it starts.
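To make the exit-code-2 idea concrete, here is a minimal Python sketch of the decision logic such a hook could use. It assumes the hook receives a JSON payload whose `tool_input.command` field holds the shell command; that shape, plus the names `is_dangerous` and `hook_exit_code`, are illustrative assumptions, so check the hooks documentation for your version before relying on it.

```python
import json
import re

# Matches `rm` followed by one or more short-flag groups, e.g. `-rf` or `-r -f`.
# Long options such as `--recursive --force` are not covered by this sketch.
RM_WITH_FLAGS = re.compile(r"\brm\s+((?:-\w+\s+)*-\w+)")


def is_dangerous(command: str) -> bool:
    """Return True if the command runs rm with both recursive and force flags."""
    for match in RM_WITH_FLAGS.finditer(command):
        # Collapse "-r -f" or "-rf" into a bare string of flag letters.
        flags = "".join(match.group(1).replace("-", " ").split())
        if "r" in flags.lower() and "f" in flags:
            return True
    return False


def hook_exit_code(raw_payload: str) -> int:
    """Map a hook payload (JSON text) to an exit code: 2 blocks, 0 allows."""
    payload = json.loads(raw_payload)
    # Assumed payload shape: {"tool_input": {"command": "..."}}.
    command = payload.get("tool_input", {}).get("command", "")
    return 2 if is_dangerous(command) else 0


# Simulated payloads; a real hook script would instead end with
# sys.exit(hook_exit_code(sys.stdin.read())).
for command in ("rm -rf build", "rm notes.txt"):
    payload = json.dumps({"tool_name": "Bash", "tool_input": {"command": command}})
    print(command, "->", hook_exit_code(payload))
```

The check lives in plain code rather than in a prompt, which is the whole point: the model cannot forget it.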

Test running. A PostToolUse hook runs the relevant tests after file changes.

Quality checks. I run an AI-marker detection script after every write. 170 lines that check for 70+ AI-marker phrases. If it finds one, the hook flags it. This never lived in CLAUDE.md. It couldn't. You can't probabilistically enforce a 70-item blocklist.
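A blocklist check like this is simple to sketch. The version below is not my actual 170-line script; the three phrases in `MARKER_PHRASES` and the function name `find_markers` are placeholders chosen for illustration.

```python
# Hypothetical blocklist; a real list would be much longer.
MARKER_PHRASES = ["delve into", "it's worth noting", "in today's fast-paced world"]


def find_markers(text, phrases=MARKER_PHRASES):
    """Return (line_number, phrase) pairs for each blocklisted phrase found."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        lowered = line.lower()  # case-insensitive substring match
        for phrase in phrases:
            if phrase in lowered:
                hits.append((number, phrase))
    return hits


sample = "Let's delve into the config.\nPlain sentence.\nIt's worth noting the hook ran."
for number, phrase in find_markers(sample):
    print(f"line {number}: {phrase}")
```

Wired into a PostToolUse hook, a non-empty result is what would get flagged.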

Moving these rules from CLAUDE.md to hooks cut my instruction file from 200+ lines to under 80. The rules that moved went from "usually followed" to "always followed."

The Other 4 Changes

The hooks insight was the biggest. But four other patterns from the leaked code changed how I think about my setup.

Memory taxonomy. The code enforces a strict 4-type system (user, feedback, project, reference) and explicitly excludes anything derivable from code. I was saving architecture notes and file paths. Wasted tokens. Stale within days.

CLAUDE.md as a budget. Not a document. Not a README. A budget of ~100 prescriptive constraints. I audited every line and cut 60%.

Skill frontmatter. Only frontmatter fields get surfaced to the model for discovery. The body content only enters context on invocation. My skills had vague names and no `when_to_use` field. They never got auto-invoked. Dead skills sitting in a directory.
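A quick way to catch dead skills is to lint their frontmatter. The sketch below assumes a skill file opens with a `---`-delimited block of simple `key: value` lines and that `name`, `description`, and `when_to_use` are the fields worth requiring; that required set and the helper names are my assumptions, not something confirmed beyond the `when_to_use` field mentioned above.

```python
REQUIRED_FIELDS = ("name", "description", "when_to_use")  # assumed set


def parse_frontmatter(text):
    """Return top-level `key: value` pairs from a leading ----delimited block."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        key, sep, value = line.partition(":")
        # Skip indented lines so nested values are not mistaken for fields.
        if sep and key.strip() and not line.startswith((" ", "\t")):
            fields[key.strip()] = value.strip()
    return {}  # no closing delimiter: treat as missing frontmatter


def missing_fields(text, required=REQUIRED_FIELDS):
    """List required fields that are absent or empty."""
    fields = parse_frontmatter(text)
    return [name for name in required if not fields.get(name)]


skill = """---
name: release-notes
description: Draft release notes from merged pull requests.
---
Body instructions go here.
"""
print(missing_fields(skill))
```

Run it over every file in your skills directory and fix whatever it reports before blaming the model for ignoring a skill.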

Context economics. Each MCP server injects tool definitions into your context. Some cost 15,000+ tokens. Connect 5 servers and you've burned 60,000 tokens before typing your first message. The leaked code has a multi-stage compaction system to manage this. Most users never trigger full compaction deliberately.
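The arithmetic is worth running against your own setup. In this sketch the server names, the per-server token costs, and the 200,000-token window are all made-up illustrative values; substitute the numbers you actually measure.

```python
# Hypothetical per-server tool-definition costs, in tokens.
SERVER_TOKENS = {
    "issue_tracker": 15_000,
    "browser": 14_000,
    "database": 12_000,
    "docs": 10_000,
    "search": 9_000,
}
CONTEXT_WINDOW = 200_000  # assumed window size, in tokens


def tool_overhead(servers):
    """Total tokens spent on tool definitions before the first message."""
    return sum(servers.values())


def heaviest_first(servers):
    """Server names ordered from most to least expensive."""
    return sorted(servers, key=servers.get, reverse=True)


overhead = tool_overhead(SERVER_TOKENS)
print(f"{overhead} tokens, {overhead / CONTEXT_WINDOW:.0%} of the window")
print("disconnect candidates:", heaviest_first(SERVER_TOKENS)[:2])
```

With these example numbers, five servers add up to the 60,000 tokens mentioned above, and the heaviest two account for almost half of that.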

Each of these has a specific implementation recipe that takes under 30 minutes.
